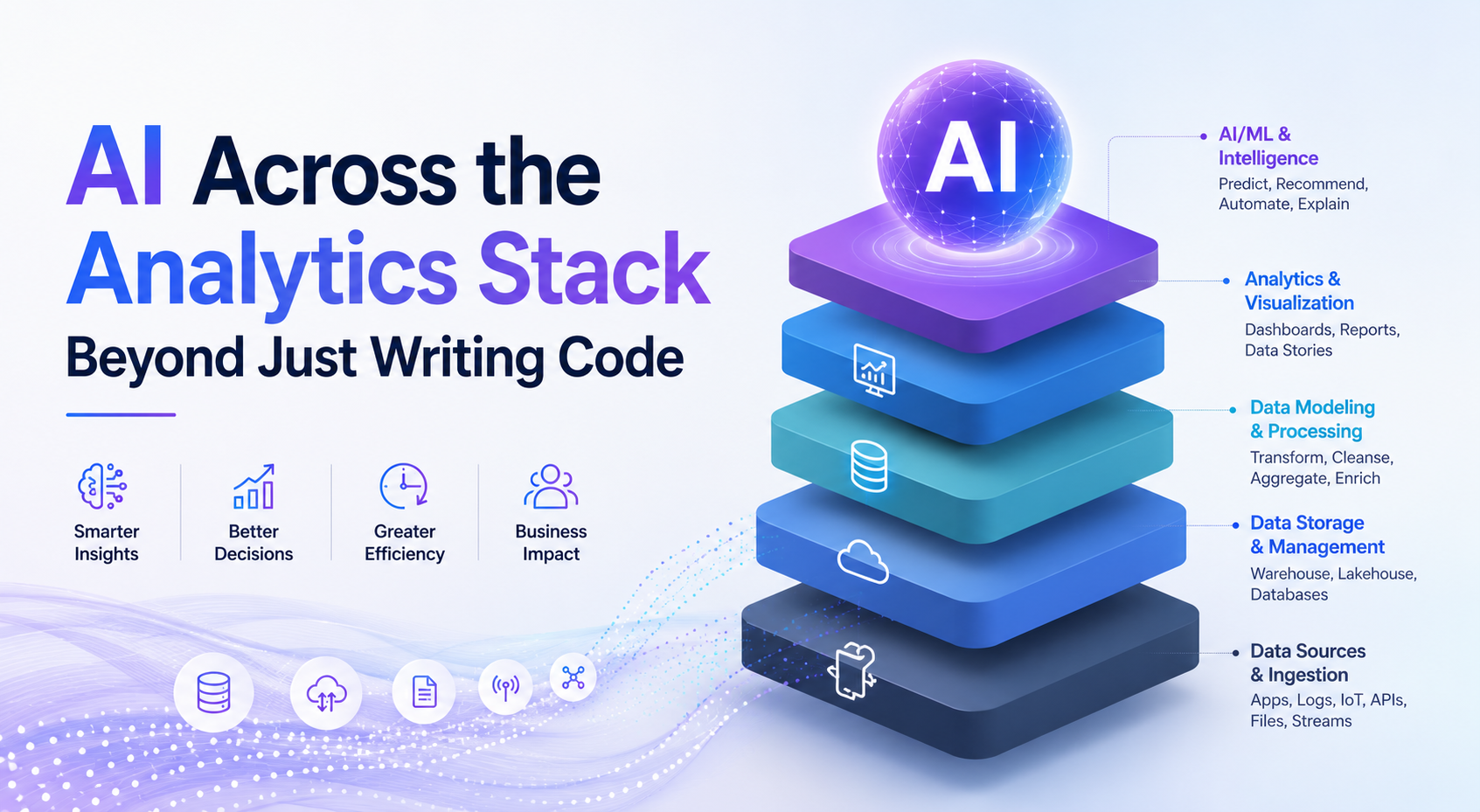

The conversation around AI in data science has long been limited to code assistance and smarter development tools. That framing no longer reflects what is actually happening across the analytics stack.

In 2026, AI operates across every phase of the analytical process, not just code generation: data collection and engineering, pipeline management, model validation, and stakeholder reporting.

According to a March 2026 Mordor Intelligence report, the global data science platform market is projected to reach $132.19 billion in 2026, growing to $284.37 billion by 2031 as enterprises shift from isolated machine learning pilots toward integrated production systems that connect data ingestion, model training, and governance.
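For a sense of scale, the two endpoint figures above imply a compound annual growth rate. The short sketch below derives it from the quoted numbers only; the function name `cagr` is a hypothetical helper, and the five-year compounding window (2026 to 2031) is an assumption read off the dates in the report summary.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in USD billions; 2026 -> 2031 is five compounding years
implied = cagr(132.19, 284.37, years=5)
print(f"{implied:.1%}")  # prints 16.6%
```

In other words, the projection corresponds to the market growing by roughly one sixth every year over the period.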

That shift is not just a market story. It reflects a fundamental change in what AI is being asked to do inside organizations and what data science professionals need to understand to keep pace with it.

Where AI Actually Shows Up in the Data Science Workflow Beyond Code Generation

Most data science projects stall before they reach the modeling stage, held back by poor data quality, unreliable pipelines, and unclear problem definitions. These are precisely the areas where AI is now contributing most visibly.

| Workflow Stage | What AI Is Doing Today |
| --- | --- |
| Problem Definition | Identifying scope gaps, project risks, and data inconsistencies using insights from previous project experiences and historical datasets. |
| Data Collection | Structured and unstructured data ingestion from multiple sources without much manual effort. |
| Data Engineering | Running automatic anomaly detection, schema recommendations, dataset profiling, and data quality checks. |
| Data Pipelining | Automating manual ETL tasks with AI-generated pipeline creation, scaffolding, testing, orchestration, and monitoring. |
| Feature Engineering | Providing feature transformations and creating features from the relations between features and target variables. |
| Modeling | Automation of hyperparameter tuning, model selection, scalability optimization, and cross-validation processes. |
| Monitoring | Real-time detection of data drift, concept drift, pipeline degradation, and performance problems in production systems. |
| Reporting | Interpreting and communicating the results of complicated model outputs to non-technical users. |

According to the USDSI® insight "A Data Science Workflow Exhibit for Project Success," a well-defined data science workflow is the backbone of any organization’s shift toward data-driven decision-making. It enables teams to move systematically from problem identification through model deployment and performance optimization.
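To make the Monitoring stage more concrete, the sketch below computes a Population Stability Index (PSI), one common way to flag data drift by comparing a production sample against a training-time reference. It is an illustration, not any particular platform's implementation: the function name `psi`, the equal-width binning, the choice of ten bins, and the synthetic samples are all assumptions made for the example.

```python
import math
import random


def psi(reference, current, n_bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bin edges are equal-width over the reference sample's range;
    values outside that range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant reference

    def bin_shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Synthetic data (assumption): one feature at training time and in production
random.seed(0)
training = [random.gauss(0.0, 1.0) for _ in range(5000)]
same_dist = [random.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [random.gauss(1.0, 1.0) for _ in range(5000)]  # mean moved by one std dev

psi_stable = psi(training, same_dist)
psi_drifted = psi(training, shifted)
print(f"stable:  {psi_stable:.3f}")
print(f"drifted: {psi_drifted:.3f}")
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, though teams typically tune these cutoffs to their own data. Note that a check like this catches changes in input distributions only; concept drift, where the relationship between inputs and target changes, needs labeled outcomes to detect.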

Data Engineering and Pipelining: The Biggest Shift

Data engineering has historically absorbed the majority of a project’s timeline. Schema design, connector maintenance, null value handling, and pipeline debugging all determine whether a model has reliable data to work with, yet these tasks rarely feature in conversations about data science progress.

AI tooling has meaningfully reduced that overhead. Modern platforms can infer schemas, suggest join logic, surface data quality issues before they reach downstream processes, and auto-generate documentation and unit tests for transformation steps.
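As a rough illustration of what schema inference and data quality checks involve at their simplest, the sketch below profiles a small batch of raw records. It uses only the Python standard library; the helper names `infer_type` and `profile`, the sample rows, and the rule that a column mixing integers and decimals counts as `float` are assumptions for the example, not how any specific platform works.

```python
from collections import Counter


def infer_type(value):
    """Classify one raw value as 'null', 'int', 'float', or 'str'."""
    if value is None or str(value).strip() == "":
        return "null"
    text = str(value).strip()
    for caster, name in ((int, "int"), (float, "float")):
        try:
            caster(text)
            return name
        except ValueError:
            continue
    return "str"


def profile(rows):
    """Return per-column inferred type, null rate, and count of off-type values."""
    columns = sorted({key for row in rows for key in row})
    report = {}
    for col in columns:
        kinds = Counter(infer_type(row.get(col)) for row in rows)
        nulls = kinds.pop("null", 0)
        # A column that mixes ints and floats is treated as float
        if kinds.get("float") and kinds.get("int"):
            kinds["float"] += kinds.pop("int")
        dominant, dominant_count = kinds.most_common(1)[0] if kinds else ("unknown", 0)
        report[col] = {
            "type": dominant,
            "null_rate": nulls / len(rows),
            "mismatches": sum(kinds.values()) - dominant_count,
        }
    return report


# Hypothetical raw records, as they might arrive from a CSV export
rows = [
    {"order_id": "1001", "amount": "19.99", "country": "DE"},
    {"order_id": "1002", "amount": "5", "country": ""},
    {"order_id": "1003", "amount": "n/a", "country": "FR"},
    {"order_id": "1004", "amount": None, "country": "US"},
]
report = profile(rows)
for column, stats in report.items():
    print(column, stats)
```

Here the `amount` column is inferred as `float` with one missing value and one off-type entry (`"n/a"`), which is the kind of issue worth surfacing before it reaches a downstream transformation. Production tooling layers far more on top (statistical anomaly detection, lineage, suggested fixes), but the underlying checks follow the same pattern.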

What Remains Beyond Automation

However efficient the tooling becomes, three areas still require direct human judgment.

- Domain knowledge determines whether a model is solving the right problem, not merely hitting the right metric.

- Automated summaries are useful, but interpreting results for stakeholders depends on context and cannot be automated.

- Ethical oversight involves tradeoffs around bias, fairness, and accountability that no automated check can resolve on its own.

Top Data Science Certifications Worth Considering for Upskilling

For professionals looking to build structured competency across the full data science lifecycle:

- Certified Senior Data Scientist (CSDS™) by USDSI®

A self-paced professional certification for those who want to command the full data science workflow. It covers advanced ML algorithms, big data ecosystems and data lakes, strategic data science for business, cloud computing, and data visualization, the competencies needed to oversee AI-augmented pipelines at a senior level.

- Certificate in Data Science by UC Berkeley Extension

A professional certificate that covers data science fundamentals, machine learning, Python, and statistical analysis, building the technical grounding required to work effectively alongside AI tools across the data science lifecycle.

- Certification of Professional Achievement in Data Sciences by Columbia University

A non-degree professional certification delivered directly through Columbia University’s own Columbia Video Network. Its four required courses in algorithms, machine learning, and exploratory data analysis equip practitioners with the core skills needed to evaluate, interpret, and govern AI-driven outputs across the full analytical workflow.

The Way Forward

Organizations today expect data science teams to own the full analytical chain, not individual stages of it. Professionals who understand where AI accelerates each part of that process, and where human judgment remains essential, are the ones best positioned to deliver consistent, trustworthy results. That breadth of understanding, grounded in both technical fluency and domain awareness, is what defines the data science practitioner this field now requires.

FAQs

Can AI replace the need for a dedicated data scientist on smaller teams?

Not reliably. AI can automate execution tasks, but smaller teams still need someone who can frame the right business problem and interpret results in context.

What programming languages are most relevant for working alongside AI-assisted data science tools today?

Python remains the language of choice, but SQL is becoming increasingly relevant as AI tools move closer to the data layer rather than operating only at the modeling layer.

What technical skills should a data scientist prioritize to stay effective as AI handles more of the workflow?

Statistical reasoning, data storytelling, and critical evaluation of model output matter more than ever.